Examples

Classification: Criteo

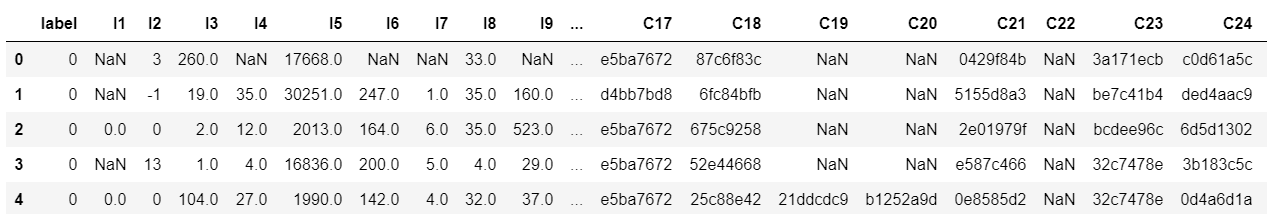

The Criteo Display Ads dataset is used for predicting ad click-through rate. It has 13 integer features and 26 categorical features, each of which has high cardinality.

In this example, we simply normalize the dense features to the range 0 to 1; you can try other transformation techniques such as log normalization or discretization. We then use SparseFeat and DenseFeat to generate feature columns for the sparse and dense features.
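As a rough illustration of the two alternative transformations mentioned above, the following sketch applies log normalization and discretization to a single dense column in plain Python. The sample values, the bucket boundaries, and the helper names `log_normalize` and `discretize` are assumptions made for illustration; they are not part of DeepCTR. The log transform assumes non-negative inputs.

```python
import math
from bisect import bisect_right


def log_normalize(values):
    """Compress a skewed, non-negative feature with log(1 + x)."""
    return [math.log1p(v) for v in values]


def discretize(values, boundaries):
    """Map each value to the index of the bucket it falls into.

    `boundaries` must be sorted; a value equal to a boundary goes to the
    bucket on its right.
    """
    return [bisect_right(boundaries, v) for v in values]


raw = [0, 1, 9, 99, 4500]  # hypothetical counts for one dense column
logged = log_normalize(raw)
buckets = discretize(raw, boundaries=[1, 10, 100, 1000])  # assumed boundaries
```

A discretized column holds small integer ids, so one option is to describe it with SparseFeat instead of DenseFeat; whether that helps depends on the data.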

This example shows how to use DeepFM to solve a simple binary classification task. You can get the demo data criteo_sample.txt and run the following code.

import pandas as pd
from sklearn.metrics import log_loss, roc_auc_score
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import LabelEncoder, MinMaxScaler

from deepctr.models import DeepFM
from deepctr.feature_column import SparseFeat, DenseFeat, get_feature_names

if __name__ == "__main__":
    data = pd.read_csv('./criteo_sample.txt')

    sparse_features = ['C' + str(i) for i in range(1, 27)]
    dense_features = ['I' + str(i) for i in range(1, 14)]

    data[sparse_features] = data[sparse_features].fillna('-1')
    data[dense_features] = data[dense_features].fillna(0)
    target = ['label']

    # 1. Label-encode the sparse features and scale the dense features to [0, 1]
    for feat in sparse_features:
        lbe = LabelEncoder()
        data[feat] = lbe.fit_transform(data[feat])
    mms = MinMaxScaler(feature_range=(0, 1))
    data[dense_features] = mms.fit_transform(data[dense_features])

    # 2. Count the unique values of each sparse field and record the dense field names
    fixlen_feature_columns = [SparseFeat(feat, vocabulary_size=data[feat].max() + 1, embedding_dim=4)
                              for feat in sparse_features] + [DenseFeat(feat, 1)
                                                              for feat in dense_features]

    dnn_feature_columns = fixlen_feature_columns
    linear_feature_columns = fixlen_feature_columns

    feature_names = get_feature_names(linear_feature_columns + dnn_feature_columns)

    # 3. Generate input data for the model
    train, test = train_test_split(data, test_size=0.2, random_state=2020)
    train_model_input = {name: train[name] for name in feature_names}
    test_model_input = {name: test[name] for name in feature_names}

    # 4. Define the model, then train, predict and evaluate
    model = DeepFM(linear_feature_columns, dnn_feature_columns, task='binary')
    model.compile("adam", "binary_crossentropy",
                  metrics=['binary_crossentropy'])

    history = model.fit(train_model_input, train[target].values,
                        batch_size=256, epochs=10, verbose=2, validation_split=0.2)
    pred_ans = model.predict(test_model_input, batch_size=256)
    print("test LogLoss", round(log_loss(test[target].values, pred_ans), 4))
    print("test AUC", round(roc_auc_score(test[target].values, pred_ans), 4))

Classification: Criteo with feature hashing on the fly

This example shows how to use DeepFM to solve a simple binary classification task using feature hashing. You can get the demo data criteo_sample.txt and run the following code.

import pandas as pd
from sklearn.metrics import log_loss, roc_auc_score
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import MinMaxScaler

from deepctr.models import DeepFM
from deepctr.feature_column import SparseFeat, DenseFeat, get_feature_names

if __name__ == "__main__":
    data = pd.read_csv('./criteo_sample.txt')

    sparse_features = ['C' + str(i) for i in range(1, 27)]
    dense_features = ['I' + str(i) for i in range(1, 14)]

    data[sparse_features] = data[sparse_features].fillna('-1')
    data[dense_features] = data[dense_features].fillna(0)
    target = ['label']

    # 1. Scale the dense features to [0, 1]
    mms = MinMaxScaler(feature_range=(0, 1))
    data[dense_features] = mms.fit_transform(data[dense_features])

    # 2. Set the hashing space for each sparse field and record the dense field names
    fixlen_feature_columns = [SparseFeat(feat, vocabulary_size=1000, embedding_dim=4, use_hash=True, dtype='string')
                              # since the input is string
                              for feat in sparse_features] + [DenseFeat(feat, 1)
                                                              for feat in dense_features]

    linear_feature_columns = fixlen_feature_columns
    dnn_feature_columns = fixlen_feature_columns
    feature_names = get_feature_names(linear_feature_columns + dnn_feature_columns)

    # 3. Generate input data for the model
    train, test = train_test_split(data, test_size=0.2, random_state=2020)

    train_model_input = {name: train[name] for name in feature_names}
    test_model_input = {name: test[name] for name in feature_names}

    # 4. Define the model, then train, predict and evaluate
    model = DeepFM(linear_feature_columns, dnn_feature_columns, task='binary')
    model.compile("adam", "binary_crossentropy",
                  metrics=['binary_crossentropy'])

    history = model.fit(train_model_input, train[target].values,
                        batch_size=256, epochs=10, verbose=2, validation_split=0.2)
    pred_ans = model.predict(test_model_input, batch_size=256)
    print("test LogLoss", round(log_loss(test[target].values, pred_ans), 4))
    print("test AUC", round(roc_auc_score(test[target].values, pred_ans), 4))
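To make the idea behind `use_hash=True` and `vocabulary_size=1000` more concrete, here is a minimal sketch of the hashing trick in plain Python: each raw string is mapped to a bucket id in a fixed range, so no label encoding pass is needed. The helper `hash_bucket`, the choice of MD5, and the sample strings are assumptions for illustration only; the hash function DeepCTR applies internally may well differ.

```python
import hashlib


def hash_bucket(value, vocabulary_size):
    """Map a raw string to an id in [0, vocabulary_size) with a stable hash."""
    digest = hashlib.md5(value.encode("utf-8")).hexdigest()
    return int(digest, 16) % vocabulary_size


# Hypothetical category strings; the same string always lands in the same bucket.
raw_values = ["05db9164", "68fd1e64", "05db9164", "-1"]
ids = [hash_bucket(v, vocabulary_size=1000) for v in raw_values]
```

The trade-off is that distinct values can collide in one bucket. A larger hashing space lowers the collision rate at the cost of a larger embedding table.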

Regression: Movielens

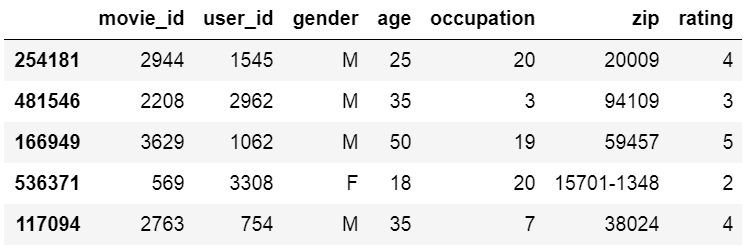

The MovieLens data has been used for personalized tag recommendation; it contains 668,953 tag applications of users on movies. Here is a small fraction of the data that includes only sparse fields.

This example shows how to use DeepFM to solve a simple regression task. You can get the demo data movielens_sample.txt and run the following code.

import pandas as pd
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import LabelEncoder

from deepctr.models import DeepFM
from deepctr.feature_column import SparseFeat, get_feature_names

if __name__ == "__main__":
    data = pd.read_csv("./movielens_sample.txt")
    sparse_features = ["movie_id", "user_id",
                       "gender", "age", "occupation", "zip"]
    target = ['rating']

    # 1. Label-encode the sparse features (this sample has no dense features)
    for feat in sparse_features:
        lbe = LabelEncoder()
        data[feat] = lbe.fit_transform(data[feat])

    # 2. Count the unique values of each sparse field
    fixlen_feature_columns = [SparseFeat(feat, data[feat].max() + 1, embedding_dim=4)
                              for feat in sparse_features]
    linear_feature_columns = fixlen_feature_columns
    dnn_feature_columns = fixlen_feature_columns
    feature_names = get_feature_names(linear_feature_columns + dnn_feature_columns)

    # 3. Generate input data for the model
    train, test = train_test_split(data, test_size=0.2, random_state=2020)
    train_model_input = {name: train[name].values for name in feature_names}
    test_model_input = {name: test[name].values for name in feature_names}

    # 4. Define the model, then train, predict and evaluate
    model = DeepFM(linear_feature_columns, dnn_feature_columns, task='regression')
    model.compile("adam", "mse", metrics=['mse'])

    history = model.fit(train_model_input, train[target].values,
                        batch_size=256, epochs=10, verbose=2, validation_split=0.2)
    pred_ans = model.predict(test_model_input, batch_size=256)
    print("test MSE", round(mean_squared_error(
        test[target].values, pred_ans), 4))

Multi-value Input: Movielens

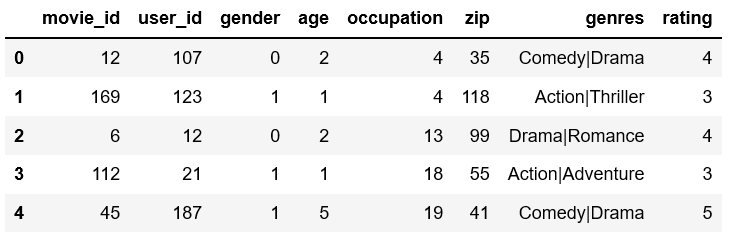

The MovieLens data has been used for personalized tag recommendation; it contains 668,953 tag applications of users on movies. Here is a small fraction of the data that includes sparse fields and a multivalent field.

There are 2 additional steps to use DeepCTR with sequence feature input.

1. Generate the padded and encoded sequence feature from the sequence input feature (value 0 is reserved for padding).

2. Generate the config of the sequence feature with VarLenSparseFeat.

For more details about padding, maxlen, embedding sharing, DIN history fields, DSIN session fields, and TFRecord inputs, see the Sequence Feature Cookbook.
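The first of the two steps above can be sketched without any library as follows. The helpers `encode_sequences` and `pad_post` and the sample genre strings are assumptions for illustration; the full example further down uses `pad_sequences` from Keras for the padding part.

```python
def encode_sequences(rows, sep="|"):
    """Assign each token an id starting at 1, leaving 0 free for padding."""
    key2index = {}
    encoded = []
    for row in rows:
        ids = []
        for key in row.split(sep):
            if key not in key2index:
                key2index[key] = len(key2index) + 1
            ids.append(key2index[key])
        encoded.append(ids)
    return encoded, key2index


def pad_post(sequences, maxlen, value=0):
    """Right-pad every sequence with `value`, cutting it at maxlen if longer."""
    return [seq[:maxlen] + [value] * (maxlen - len(seq)) for seq in sequences]


genres = ["Comedy|Drama", "Drama", "Action|Comedy|Thriller"]  # hypothetical rows
encoded, key2index = encode_sequences(genres)
max_len = max(len(seq) for seq in encoded)
padded = pad_post(encoded, max_len)
vocabulary_size = len(key2index) + 1  # +1 accounts for the padding id 0
```

Because valid ids start at 1, the vocabulary size passed to the feature config is the number of distinct tokens plus one.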

This example shows how to use DeepFM with a sequence (multi-value) feature. You can get the demo data movielens_sample.txt and run the following code.

import numpy as np
import pandas as pd
from sklearn.preprocessing import LabelEncoder
from tensorflow.keras.preprocessing.sequence import pad_sequences

from deepctr.models import DeepFM
from deepctr.feature_column import SparseFeat, VarLenSparseFeat, get_feature_names


def split(x):
    key_ans = x.split('|')
    for key in key_ans:
        if key not in key2index:
            # Notice: input value 0 is a special "padding" value, so we do not use 0 to encode valid features for sequence input
            key2index[key] = len(key2index) + 1
    return list(map(lambda x: key2index[x], key_ans))


if __name__ == "__main__":
    data = pd.read_csv("./movielens_sample.txt")
    sparse_features = ["movie_id", "user_id",
                       "gender", "age", "occupation", "zip", ]
    target = ['rating']

    # 1. Label-encode the sparse features and process the sequence feature
    for feat in sparse_features:
        lbe = LabelEncoder()
        data[feat] = lbe.fit_transform(data[feat])
    # preprocess the sequence feature
    key2index = {}
    genres_list = list(map(split, data['genres'].values))
    genres_length = np.array(list(map(len, genres_list)))
    max_len = max(genres_length)
    # Notice: padding=`post`
    genres_list = pad_sequences(genres_list, maxlen=max_len, padding='post')

    # 2. Count the unique values of each sparse field and generate the feature config for the sequence feature
    fixlen_feature_columns = [SparseFeat(feat, data[feat].max() + 1, embedding_dim=4)
                              for feat in sparse_features]

    use_weighted_sequence = False
    if use_weighted_sequence:
        varlen_feature_columns = [VarLenSparseFeat(SparseFeat('genres', vocabulary_size=len(
            key2index) + 1, embedding_dim=4), maxlen=max_len, combiner='mean',
                                                   weight_name='genres_weight')]  # Notice: value 0 is for padding for sequence input feature
    else:
        varlen_feature_columns = [VarLenSparseFeat(SparseFeat('genres', vocabulary_size=len(
            key2index) + 1, embedding_dim=4), maxlen=max_len, combiner='mean',
                                                   weight_name=None)]  # Notice: value 0 is for padding for sequence input feature

    linear_feature_columns = fixlen_feature_columns + varlen_feature_columns
    dnn_feature_columns = fixlen_feature_columns + varlen_feature_columns

    feature_names = get_feature_names(linear_feature_columns + dnn_feature_columns)

    # 3. Generate input data for the model
    # Only the sparse fields are columns of `data`; the sequence inputs are added separately.
    model_input = {name: data[name] for name in sparse_features}
    model_input["genres"] = genres_list
    if use_weighted_sequence:
        model_input["genres_weight"] = np.random.randn(data.shape[0], max_len, 1)

    # 4. Define the model, compile and train
    model = DeepFM(linear_feature_columns, dnn_feature_columns, task='regression')

    model.compile("adam", "mse", metrics=['mse'])
    history = model.fit(model_input, data[target].values,
                        batch_size=256, epochs=10, verbose=2, validation_split=0.2)

Multi-value Input: Movielens with feature hashing on the fly

import numpy as np
import pandas as pd
from tensorflow.keras.preprocessing.sequence import pad_sequences

from deepctr.feature_column import SparseFeat, VarLenSparseFeat, get_feature_names
from deepctr.models import DeepFM

if __name__ == "__main__":
    data = pd.read_csv("./movielens_sample.txt")
    sparse_features = ["movie_id", "user_id",
                       "gender", "age", "occupation", "zip", ]

    data[sparse_features] = data[sparse_features].astype(str)
    target = ['rating']

    # 1. Use hashing encoding on the fly for the sparse features and process the sequence feature
    genres_list = list(map(lambda x: x.split('|'), data['genres'].values))
    genres_length = np.array(list(map(len, genres_list)))
    max_len = max(genres_length)

    # Notice: padding=`post`
    genres_list = pad_sequences(genres_list, maxlen=max_len, padding='post', dtype=object, value=0).astype(str)

    # 2. Set the hashing space for each sparse field and generate the feature config for the sequence feature
    fixlen_feature_columns = [SparseFeat(feat, data[feat].nunique() * 5, embedding_dim=4, use_hash=True, dtype='string')
                              for feat in sparse_features]
    varlen_feature_columns = [
        VarLenSparseFeat(SparseFeat('genres', vocabulary_size=100, embedding_dim=4, use_hash=True, dtype="string"),
                         maxlen=max_len, combiner='mean',
                         )]  # Notice: value 0 is for padding for sequence input feature

    linear_feature_columns = fixlen_feature_columns + varlen_feature_columns
    dnn_feature_columns = fixlen_feature_columns + varlen_feature_columns
    feature_names = get_feature_names(linear_feature_columns + dnn_feature_columns)

    # 3. Generate input data for the model
    model_input = {name: data[name] for name in feature_names}
    model_input['genres'] = genres_list

    # 4. Define the model, compile and train
    model = DeepFM(linear_feature_columns, dnn_feature_columns, task='regression')

    model.compile("adam", "mse", metrics=['mse'])
    history = model.fit(model_input, data[target].values,
                        batch_size=256, epochs=10, verbose=2, validation_split=0.2)

Hash Layer with pre-defined key-value vocabulary

This example shows how to use a pre-defined key-value vocabulary in the Hash Layer. movielens_age_vocabulary.csv stores the key-value mapping for the age feature.

from deepctr.models import DeepFM
from deepctr.feature_column import SparseFeat, VarLenSparseFeat, get_feature_names
import numpy as np
import pandas as pd
from tensorflow.keras.preprocessing.sequence import pad_sequences

try:
    import tensorflow.compat.v1 as tf
except ImportError as e:
    import tensorflow as tf

if __name__ == "__main__":
    data = pd.read_csv("./movielens_sample.txt")
    sparse_features = ["movie_id", "user_id",
                       "gender", "age", "occupation", "zip", ]

    data[sparse_features] = data[sparse_features].astype(str)
    target = ['rating']

    # 1. Use hashing encoding on the fly for the sparse features and process the sequence feature
    genres_list = list(map(lambda x: x.split('|'), data['genres'].values))
    genres_length = np.array(list(map(len, genres_list)))
    max_len = max(genres_length)

    # Notice: padding=`post`
    genres_list = pad_sequences(genres_list, maxlen=max_len, padding='post', dtype=object, value=0).astype(str)

    # 2. Set the hashing space for each sparse field and generate the feature config for the sequence feature
    fixlen_feature_columns = [SparseFeat(feat, data[feat].nunique() * 5, embedding_dim=4, use_hash=True,
                                         vocabulary_path='./movielens_age_vocabulary.csv' if feat == 'age' else None,
                                         dtype='string')
                              for feat in sparse_features]
    varlen_feature_columns = [
        VarLenSparseFeat(SparseFeat('genres', vocabulary_size=100, embedding_dim=4,
                                    use_hash=True, dtype="string"),
                         maxlen=max_len, combiner='mean',
                         )]  # Notice: value 0 is for padding for sequence input feature

    linear_feature_columns = fixlen_feature_columns + varlen_feature_columns
    dnn_feature_columns = fixlen_feature_columns + varlen_feature_columns
    feature_names = get_feature_names(linear_feature_columns + dnn_feature_columns)

    # 3. Generate input data for the model
    model_input = {name: data[name] for name in feature_names}
    model_input['genres'] = genres_list

    # 4. Define the model, compile and train
    model = DeepFM(linear_feature_columns, dnn_feature_columns, task='regression')
    model.compile("adam", "mse", metrics=['mse'])

    if not hasattr(tf, 'version') or tf.version.VERSION < '2.0.0':
        # TensorFlow 1.x: the lookup tables must be initialized in a session first
        with tf.Session() as sess:
            sess.run(tf.tables_initializer())
            history = model.fit(model_input, data[target].values,
                                batch_size=256, epochs=10, verbose=2, validation_split=0.2)
    else:
        history = model.fit(model_input, data[target].values,
                            batch_size=256, epochs=10, verbose=2, validation_split=0.2)

Estimator with TFRecord: Classification Criteo

This example shows how to use DeepFMEstimator to solve a simple binary classification task. You can get the demo data criteo_sample.tr.tfrecords and criteo_sample.te.tfrecords and run the following code.

import tensorflow as tf

from deepctr.estimator import DeepFMEstimator
from deepctr.estimator.inputs import input_fn_tfrecord

if __name__ == "__main__":

    # 1. Generate feature columns for the linear part and the DNN part
    sparse_features = ['C' + str(i) for i in range(1, 27)]
    dense_features = ['I' + str(i) for i in range(1, 14)]

    dnn_feature_columns = []
    linear_feature_columns = []

    for i, feat in enumerate(sparse_features):
        dnn_feature_columns.append(tf.feature_column.embedding_column(
            tf.feature_column.categorical_column_with_identity(feat, 1000), 4))
        linear_feature_columns.append(tf.feature_column.categorical_column_with_identity(feat, 1000))
    for feat in dense_features:
        dnn_feature_columns.append(tf.feature_column.numeric_column(feat))
        linear_feature_columns.append(tf.feature_column.numeric_column(feat))

    # 2. Generate input data for the model
    feature_description = {k: tf.io.FixedLenFeature(dtype=tf.int64, shape=1) for k in sparse_features}
    feature_description.update(
        {k: tf.io.FixedLenFeature(dtype=tf.float32, shape=1) for k in dense_features})
    feature_description['label'] = tf.io.FixedLenFeature(dtype=tf.float32, shape=1)

    train_model_input = input_fn_tfrecord('./criteo_sample.tr.tfrecords', feature_description, 'label', batch_size=256,
                                          num_epochs=1, shuffle_factor=10)
    test_model_input = input_fn_tfrecord('./criteo_sample.te.tfrecords', feature_description, 'label',
                                         batch_size=2 ** 14, num_epochs=1, shuffle_factor=0)

    # 3. Define the model, then train and evaluate
    model = DeepFMEstimator(linear_feature_columns, dnn_feature_columns, task='binary',
                            config=tf.estimator.RunConfig(tf_random_seed=2021))

    model.train(train_model_input)
    eval_result = model.evaluate(test_model_input)

    print(eval_result)

Estimator with Pandas DataFrame: Classification Criteo

This example shows how to use DeepFMEstimator to solve a simple binary classification task. You can get the demo data criteo_sample.txt and run the following code.

import pandas as pd
import tensorflow as tf
from sklearn.metrics import log_loss, roc_auc_score
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import LabelEncoder, MinMaxScaler

from deepctr.estimator import DeepFMEstimator
from deepctr.estimator.inputs import input_fn_pandas

if __name__ == "__main__":
    data = pd.read_csv('./criteo_sample.txt')

    sparse_features = ['C' + str(i) for i in range(1, 27)]
    dense_features = ['I' + str(i) for i in range(1, 14)]

    data[sparse_features] = data[sparse_features].fillna('-1')
    data[dense_features] = data[dense_features].fillna(0)
    target = ['label']

    # 1. Label-encode the sparse features and scale the dense features to [0, 1]
    for feat in sparse_features:
        lbe = LabelEncoder()
        data[feat] = lbe.fit_transform(data[feat])
    mms = MinMaxScaler(feature_range=(0, 1))
    data[dense_features] = mms.fit_transform(data[dense_features])

    # 2. Count the unique values of each sparse field and record the dense field names
    dnn_feature_columns = []
    linear_feature_columns = []

    for i, feat in enumerate(sparse_features):
        dnn_feature_columns.append(tf.feature_column.embedding_column(
            tf.feature_column.categorical_column_with_identity(feat, data[feat].max() + 1), 4))
        linear_feature_columns.append(tf.feature_column.categorical_column_with_identity(feat, data[feat].max() + 1))
    for feat in dense_features:
        dnn_feature_columns.append(tf.feature_column.numeric_column(feat))
        linear_feature_columns.append(tf.feature_column.numeric_column(feat))

    # 3. Generate input data for the model
    train, test = train_test_split(data, test_size=0.2, random_state=2021)

    # Not setting default value for continuous feature. filled with mean.
    train_model_input = input_fn_pandas(train, sparse_features + dense_features, 'label', shuffle=True)
    test_model_input = input_fn_pandas(test, sparse_features + dense_features, None, shuffle=False)

    # 4. Define the model, then train, predict and evaluate
    model = DeepFMEstimator(linear_feature_columns, dnn_feature_columns, task='binary',
                            config=tf.estimator.RunConfig(tf_random_seed=2021))

    model.train(train_model_input)
    pred_ans_iter = model.predict(test_model_input)
    pred_ans = list(map(lambda x: x['pred'], pred_ans_iter))

    print("test LogLoss", round(log_loss(test[target].values, pred_ans), 4))
    print("test AUC", round(roc_auc_score(test[target].values, pred_ans), 4))

Multi-task Learning: MMOE

The UCI census-income dataset is extracted from the 1994 census database. It contains 299,285 instances of demographic information about American adults, with 40 features in total. We construct a multi-task learning problem from this dataset by setting some of the features as prediction targets:

Task 1: Predict whether the income exceeds $50K;

Task 2: Predict whether this person’s marital status is never married.
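The two targets above are derived from ordinary columns of the raw data. A minimal sketch of that derivation for individual records is shown below; the helper `make_labels` and the sample records are hypothetical, while the column names and raw string values follow the full example further down.

```python
def make_labels(record):
    """Derive the two binary targets from one raw census record."""
    # Raw values carry a leading space in the file, so strip before comparing.
    label_income = 1 if record["income_50k"].strip() == "50000+." else 0
    label_marital = 1 if record["marital_stat"].strip() == "Never married" else 0
    return label_income, label_marital


records = [  # hypothetical rows
    {"income_50k": " - 50000.", "marital_stat": " Never married"},
    {"income_50k": " 50000+.", "marital_stat": " Married-civilian spouse present"},
]
labels = [make_labels(r) for r in records]
```

Once the labels are built, the two source columns have to be dropped from the feature set so the model cannot read the answers from its inputs.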

This example shows how to use MMOE to solve a multi-task learning problem. You can get the demo data census-income.sample and run the following code.

import pandas as pd
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import LabelEncoder, MinMaxScaler

from deepctr.feature_column import SparseFeat, DenseFeat, get_feature_names
from deepctr.models import MMOE

if __name__ == "__main__":
    column_names = ['age', 'class_worker', 'det_ind_code', 'det_occ_code', 'education', 'wage_per_hour', 'hs_college',
                    'marital_stat', 'major_ind_code', 'major_occ_code', 'race', 'hisp_origin', 'sex', 'union_member',
                    'unemp_reason', 'full_or_part_emp', 'capital_gains', 'capital_losses', 'stock_dividends',
                    'tax_filer_stat', 'region_prev_res', 'state_prev_res', 'det_hh_fam_stat', 'det_hh_summ',
                    'instance_weight', 'mig_chg_msa', 'mig_chg_reg', 'mig_move_reg', 'mig_same', 'mig_prev_sunbelt',
                    'num_emp', 'fam_under_18', 'country_father', 'country_mother', 'country_self', 'citizenship',
                    'own_or_self', 'vet_question', 'vet_benefits', 'weeks_worked', 'year', 'income_50k']
    data = pd.read_csv('./census-income.sample', header=None, names=column_names)

    # Build the two task labels, then drop the columns they were derived from
    data['label_income'] = data['income_50k'].map({' - 50000.': 0, ' 50000+.': 1})
    data['label_marital'] = data['marital_stat'].apply(lambda x: 1 if x == ' Never married' else 0)
    data.drop(labels=['income_50k', 'marital_stat'], axis=1, inplace=True)

    columns = data.columns.values.tolist()
    sparse_features = ['class_worker', 'det_ind_code', 'det_occ_code', 'education', 'hs_college', 'major_ind_code',
                       'major_occ_code', 'race', 'hisp_origin', 'sex', 'union_member', 'unemp_reason',
                       'full_or_part_emp', 'tax_filer_stat', 'region_prev_res', 'state_prev_res', 'det_hh_fam_stat',
                       'det_hh_summ', 'mig_chg_msa', 'mig_chg_reg', 'mig_move_reg', 'mig_same', 'mig_prev_sunbelt',
                       'fam_under_18', 'country_father', 'country_mother', 'country_self', 'citizenship',
                       'vet_question']
    dense_features = [col for col in columns if
                      col not in sparse_features and col not in ['label_income', 'label_marital']]

    data[sparse_features] = data[sparse_features].fillna('-1')
    data[dense_features] = data[dense_features].fillna(0)

    # 1. Scale the dense features to [0, 1] and label-encode the sparse features
    mms = MinMaxScaler(feature_range=(0, 1))
    data[dense_features] = mms.fit_transform(data[dense_features])

    for feat in sparse_features:
        lbe = LabelEncoder()
        data[feat] = lbe.fit_transform(data[feat])

    # 2. Count the unique values of each sparse field and record the dense field names
    # (parentheses keep the two list comprehensions in one expression)
    fixlen_feature_columns = ([SparseFeat(feat, data[feat].max() + 1, embedding_dim=4) for feat in sparse_features]
                              + [DenseFeat(feat, 1) for feat in dense_features])

    dnn_feature_columns = fixlen_feature_columns
    linear_feature_columns = fixlen_feature_columns

    feature_names = get_feature_names(linear_feature_columns + dnn_feature_columns)

    # 3. Generate input data for the model
    train, test = train_test_split(data, test_size=0.2, random_state=2020)
    train_model_input = {name: train[name] for name in feature_names}
    test_model_input = {name: test[name] for name in feature_names}

    # 4. Define the model, then train, predict and evaluate
    model = MMOE(dnn_feature_columns, tower_dnn_hidden_units=[], task_types=['binary', 'binary'],
                 task_names=['label_income', 'label_marital'])
    model.compile("adam", loss=["binary_crossentropy", "binary_crossentropy"],
                  metrics=['binary_crossentropy'])

    history = model.fit(train_model_input, [train['label_income'].values, train['label_marital'].values],
                        batch_size=256, epochs=10, verbose=2, validation_split=0.2)
    pred_ans = model.predict(test_model_input, batch_size=256)

    print("test income AUC", round(roc_auc_score(test['label_income'], pred_ans[0]), 4))
    print("test marital AUC", round(roc_auc_score(test['label_marital'], pred_ans[1]), 4))